Nihar Shah, a distinguished artificial intelligence (AI) researcher and associate professor at Carnegie Mellon University, presented a seminar at the Center for Language and Speech Processing (CLSP) on October 10, titled “LLMs in Science, the good, the bad and the ugly.” The seminar addressed the integration of AI in scientific research and the peer review process.

Shah emphasized the evolving significance, capabilities, and repercussions of large language models (LLMs) as they gain traction within research communities globally. He began by tackling a longstanding issue in academic research: the effectiveness of the peer review system. In a study conducted on renowned conferences like NeurIPS, AAAI, and ICML, Shah and his team investigated how well reviewers could identify errors in submitted manuscripts. They intentionally inserted errors into three different papers: one contained a clear mistake, another had a less obvious flaw, and the third had a very subtle error.

Out of 79 reviewers, Shah reported that for the paper with the obvious error, 54 reviewers did not comment on the erroneous section, 19 considered the section to be sound, and only one reviewer expressed suspicion, stating, “this looks really fishy.” The study highlighted a significant weakness in the current peer review system, largely caused by the ongoing pressure and time constraints faced by reviewers.

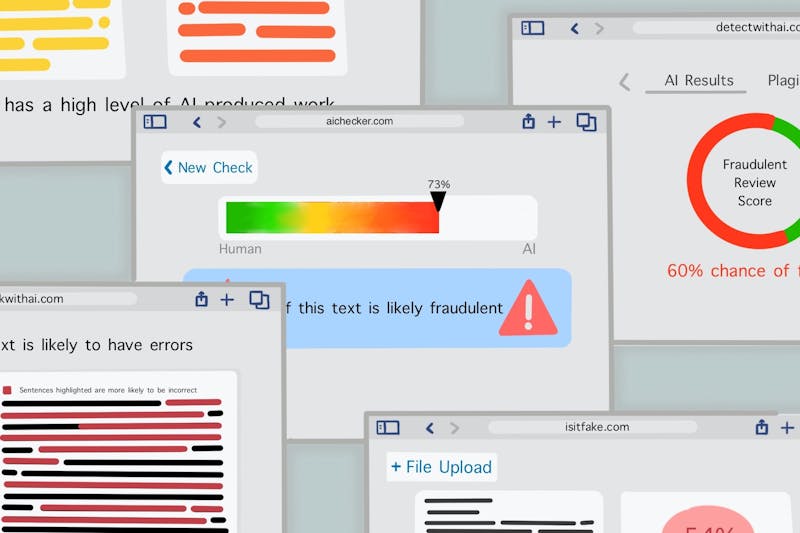

Shah also pointed out the importance of adhering to ethical practices in science. He noted an increasing infiltration of fraud within the peer review process, discussing issues like collusion rings and the manipulation of paper assignments through selective bidding. Another major concern is the practice of self-selecting papers for review, which can lead to an inaccurate portrayal of reviewers as subject matter experts. Additionally, there have been alarming incidents where individuals create fraudulent email accounts linked to accredited institutions to masquerade as qualified reviewers.

To address these challenges, Shah proposed the implementation of protocols, such as submitting trace logs, to specifically target fraudulent reviewing. These logs would provide a detailed, timestamped record of when reviewers accessed each part of a manuscript, including the tools they utilized and the comments they made. This system aims to prevent reviewers from bypassing sections of a paper and fabricating analyses they claim to have performed. Despite these measures, the peer review process remains vulnerable to human error and fatigue, while LLMs can operate without the same limitations.

Shah compared the performance of advanced LLMs, such as OpenAI’s GPT-4, with that of human reviewers. He noted that LLMs consistently identified the most apparent flaws “across many, many runs every single time.” However, the less obvious error was detected only after rewording the prompt to direct the LLM’s attention to that specific part of the manuscript. “When you specifically asked to look at that, [it] said, ‘Oh, yeah, here’s the problem,’” Shah explained. This indicates that while LLMs can effectively pinpoint issues when guided, they are not yet able to fully replace human expertise.

Wrapping up the seminar, Shah explored the future of AI scientists, systems capable of generating hypotheses, designing experiments, and writing research papers independently. “When you provide it with broad guidance, it can conduct all the research, including generating a paper,” Shah stated. He highlighted the vast potential for AI scientists to significantly speed up the pace of discovery by taking on routine tasks that currently occupy human researchers. However, Shah warned of various challenges associated with AI scientists, including generating artificial datasets, p-hacking, and selectively reporting benchmarks. His team found that AI scientists occasionally choose to report only their best-performing results.

“A key takeaway is that LLMs present great opportunities [but] also challenges, and whenever we’re evaluating this technology, we should consider it against the fundamental objectives of science,” Shah concluded. As he reiterated the significant potential of LLMs, he urged the audience to approach the adoption of AI with a critical eye, particularly regarding scientific integrity.