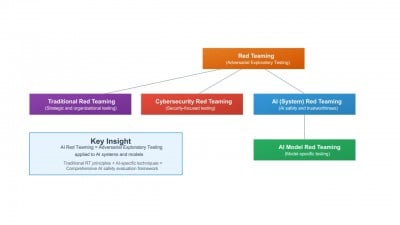

The Electronics and Telecommunications Research Institute (ETRI) has announced that it is developing international standards to improve the safety and reliability of artificial intelligence (AI) systems. The proposed standards include the “AI Red Team Testing” framework, which aims to proactively identify risks in AI systems, and the “Trustworthiness Fact Label” (TFL), which is intended to help consumers easily evaluate the authenticity and reliability of AI systems.

ETRI has submitted these proposals to the International Organization for Standardization (ISO) and the International Electrotechnical Commission (IEC), marking the start of full-scale development of the standards. ETRI emphasizes that these measures are essential for fostering consumer trust in AI applications, which are becoming increasingly prevalent across sectors.

With the AI Red Team Testing standard, ETRI seeks to establish a systematic approach to identifying vulnerabilities in AI systems before they can be exploited. This proactive stance matters as AI technologies evolve rapidly and become integrated into everyday life.

Meanwhile, the Trustworthiness Fact Label aims to create a transparent mechanism that allows users to assess the reliability and integrity of AI systems. This initiative aligns with the growing demand for accountability and transparency in AI, reinforcing the necessity for standards that address ethical considerations and consumer protection.

As ETRI continues its collaboration with ISO and IEC, the establishment of these standards is expected to play a pivotal role in shaping the future landscape of AI technology, ensuring its responsible and trustworthy deployment worldwide.