Qualcomm has disclosed its latest advancements in the AI datacenter sector, showcasing two new accelerators, the AI200 and AI250, along with rack-scale systems designed for efficient inferencing workloads. This announcement marks a strategic move by the company to penetrate the competitive AI market.

Technical details remain limited, but Qualcomm said the AI200 accelerator supports 768 GB of LPDDR memory per card. The AI250 will use a memory architecture based on near-memory computing, which Qualcomm says delivers more than ten times the effective memory bandwidth at much lower power consumption for AI inference tasks.

The company plans to ship these accelerators in pre-configured racks that use direct liquid cooling for thermal management, PCIe for scale-up, and Ethernet for scale-out. The racks will also include security features for AI workloads, and each is rated at 160 kW of rack-level power consumption.
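To put the 160 kW figure in perspective, the short Python sketch below estimates the yearly energy use of a single rack. Only the 160 kW rating comes from the announcement; the utilization factor and the electricity price are hypothetical placeholders, and treating the rating as a continuous draw is an assumption that likely overstates real consumption.

```python
# Rough annual energy estimate for one rack.
# Only RACK_POWER_KW comes from the announcement; everything else is assumed.
RACK_POWER_KW = 160
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_mwh(power_kw: float = RACK_POWER_KW, utilization: float = 1.0) -> float:
    """Energy in MWh per year, treating the rating as a constant draw
    scaled by a utilization factor between 0 and 1 (an assumption)."""
    return power_kw * utilization * HOURS_PER_YEAR / 1000


def annual_cost_usd(price_per_kwh: float, utilization: float = 1.0) -> float:
    """Electricity cost per year at a hypothetical price per kWh."""
    return annual_energy_mwh(utilization=utilization) * 1000 * price_per_kwh


if __name__ == "__main__":
    print(f"{annual_energy_mwh():.1f} MWh per year at full load")
    # 0.10 USD/kWh is a placeholder, not a figure from Qualcomm.
    print(f"${annual_cost_usd(0.10):,.0f} per year at $0.10/kWh")
```

Cooling overhead and partial load are not modelled here, so the result is best read as an order-of-magnitude guide rather than an operating cost.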

During a previous engagement, Qualcomm’s CEO, Cristiano Amon, hinted at the company’s intention to enter the AI datacenter market with “something unique and disruptive.” This approach is informed by Qualcomm’s expertise in CPU development, focusing on high-performance clusters that operate with minimal power usage. However, the recent announcement does not reference CPUs directly.

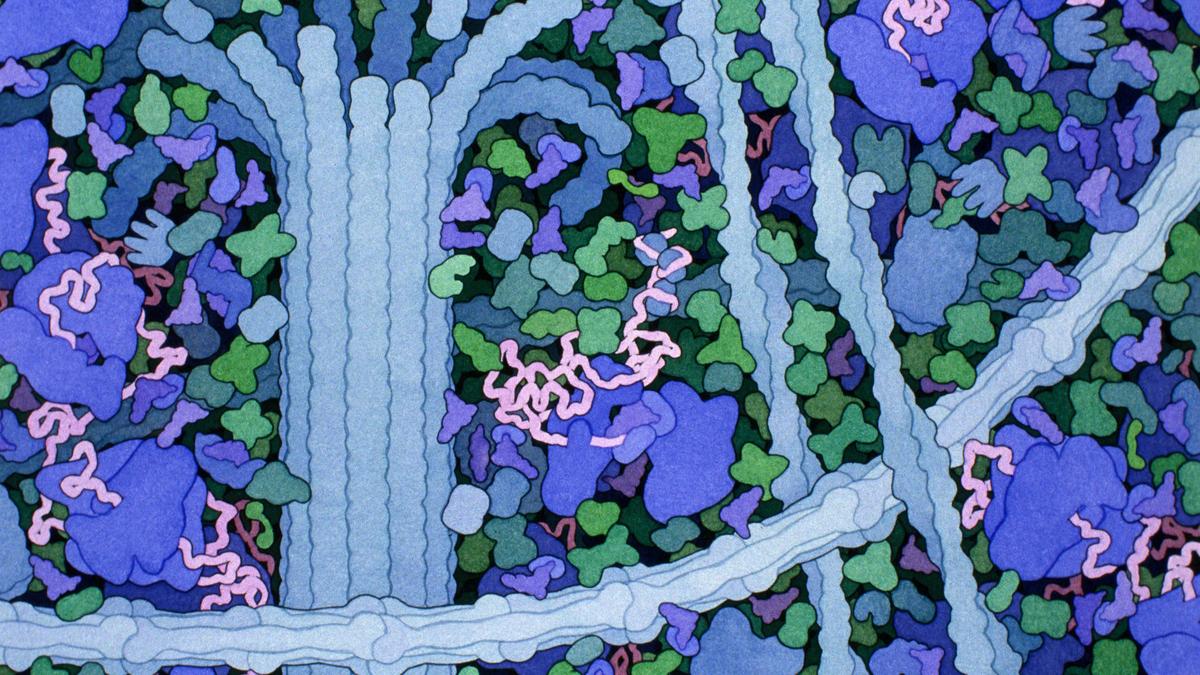

Qualcomm’s statement emphasizes its leadership in neural processing unit (NPU) technology, which is reflected in the capabilities of its Hexagon-branded NPUs, integrated into mobile and laptop processors. The latest Hexagon NPU in the Snapdragon 8 Elite SoC features multiple scalar and vector accelerators and supports various precision formats.

Another significant aspect of Qualcomm’s new offerings is the focus on rack-level performance and enhanced memory capacity, which are critical for rapid generative AI inference while maintaining cost efficiency. This addresses several key challenges faced by AI operators, including energy costs and cooling requirements, as well as memory availability for running multiple models.
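The link between memory capacity and the number of models an operator can host can be illustrated with simple arithmetic. The Python sketch below is a rough estimate only: the 768 GB figure is the AI200 capacity Qualcomm stated, while the model sizes and precisions are hypothetical examples, and the calculation counts weights alone, ignoring KV cache, activations, and runtime overhead.

```python
# Back-of-the-envelope weight footprint versus the AI200's stated capacity.
# Model sizes and precisions below are illustrative assumptions.
AI200_MEMORY_GB = 768  # LPDDR capacity stated for the AI200

# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp16": 2.0, "fp8": 1.0, "int4": 0.5}


def weights_gb(params_billion: float, precision: str) -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes)."""
    return params_billion * BYTES_PER_PARAM[precision]


def models_that_fit(params_billion: float, precision: str,
                    capacity_gb: float = AI200_MEMORY_GB) -> int:
    """How many copies of the weights fit in the given capacity."""
    return int(capacity_gb // weights_gb(params_billion, precision))


if __name__ == "__main__":
    for size, precision in [(70, "fp16"), (70, "int4"), (405, "fp8")]:
        print(f"{size}B @ {precision}: {weights_gb(size, precision):.0f} GB, "
              f"{models_that_fit(size, precision)} fit in {AI200_MEMORY_GB} GB")
```

In practice the usable headroom is smaller, since long-context inference can spend a large share of memory on the KV cache.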

The AI200’s impressive memory capacity exceeds that of leading competitors like Nvidia and AMD, suggesting that Qualcomm’s products may provide greater inferencing capabilities with reduced resource demands. This could be particularly appealing to AI operators as they look to expand their workloads.

In a notable development, Qualcomm announced that the Saudi AI company Humain plans to deploy its AI200 and AI250 rack solutions, targeting 200 megawatts of capacity starting in 2026 to deliver AI inference services both locally and globally. However, the AI250 is not expected to be available until 2027, which leaves its capabilities and competitive positioning uncertain.

Despite the lack of detailed information, Qualcomm’s latest announcement signifies the company’s renewed interest in the datacenter market following previous unsuccessful ventures focused on CPUs. Market reactions have been positive, with Qualcomm’s stock price rising by 11 percent following the news.