AI deepfakes have changed significantly in recent years, particularly in the realm of audio manipulation. A recent report from the cybersecurity firm NCC Group finds that advances since 2020 have made real-time audio deepfakes possible, and that they can be generated with accessible tools and inexpensive hardware.

This technique, dubbed “deepfake vishing” (voice phishing), uses AI to recreate an individual’s voice in real time. According to Pablo Alobera, a managing security consultant at NCC Group, once the tool has been trained it can be started with a single button press. Alobera explains, “We created a front end, a web page, with a start button. You just click start, and it starts working.”

NCC Group has not released its real-time voice deepfake tool to the public, but the research paper includes an audio sample that demonstrates how convincing it is, with minimal delay once it is running. Notably, the input audio in the demonstration was of poor quality, yet the output remained credible, suggesting that the tool could work effectively with the standard microphones built into laptops and smartphones.

Audio deepfakes are not new, but earlier techniques relied on pre-recorded audio, which made them less effective. Previous AI voice deepfakes also suffered from delays that could tip off victims whenever a conversation strayed from the expected script. NCC Group’s tool avoids these problems, allowing impersonation in real time. Alobera noted that, with client consent, the firm combined the voice changer with caller ID spoofing to impersonate individuals, stating, “Nearly all times we called, it worked. The target believed we were the person we were impersonating.”

The demonstration is significant because it relies on open-source tools and widely available hardware rather than third-party services. Although high-end graphics processing units give the best performance, testing on a laptop with an Nvidia RTX A1000 produced a voice deepfake with a delay of only half a second.

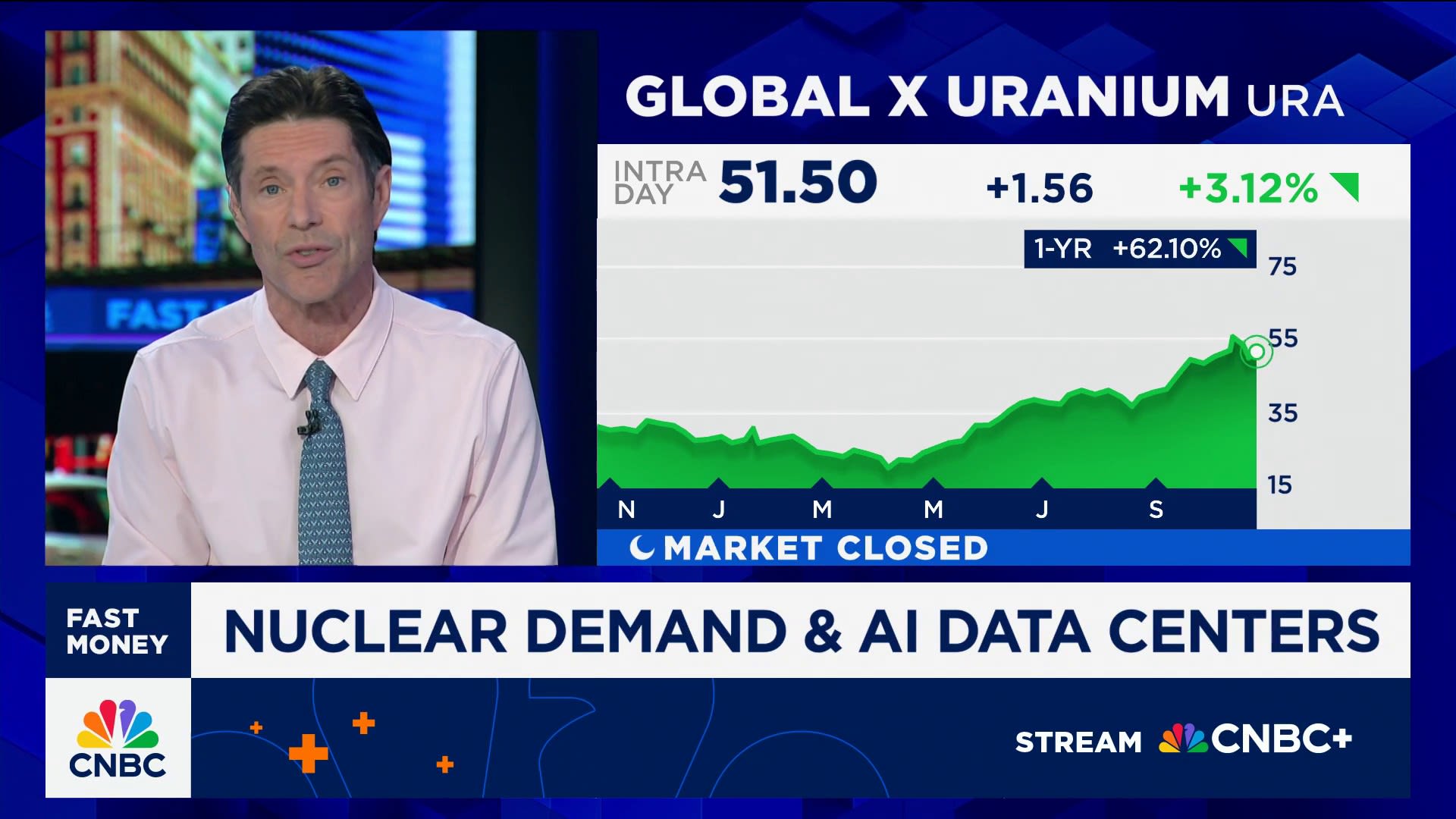

The threat posed by real-time deepfakes such as NCC Group’s may soon extend beyond audio, as video deepfakes are rapidly spreading across platforms like TikTok, YouTube, and Instagram. This growth is fueled by new AI models such as Alibaba’s Wan 2.2 Animate and Google’s Gemini 2.5 Flash Image, which make it easier to deepfake virtually anyone in any setting.

Trevor Wiseman, founder of the AI cybersecurity consultancy The Circuit, reports cases in which both companies and individuals have fallen victim to video deepfakes. In one notable case, a company was deceived during the hiring process and unwittingly shipped a laptop to a fraudulent address.

Despite their sophistication, video deepfakes still have limitations. Unlike NCC Group’s audio deepfake, current video deepfakes struggle to deliver high-quality results in real time, and they often show a noticeable mismatch between emotion in the face and in the voice. Wiseman points out that even the latest video deepfakes may pair facial expressions with a vocal tone that does not fit, which can give them away: “If they’re excited but they have no emotion on their face, it’s fake.”

Nevertheless, the technology is now good enough to fool most people most of the time. Wiseman argues that individuals and organizations need new authentication methods that let them verify identities without relying solely on voice or video. He offers a lighthearted analogy: “You know, I’m a baseball fan. They always have signals. It sounds corny, but in the day we live in, you’ve got to come up with something that you can use to say if this is real, or not.”